Artificial intelligence and generative AI are rapidly reshaping endpoint management, promising to automate remediation, predict failures, and enable natural language operations. For federal agencies, the challenge is distinguishing platforms that deliver meaningful, reliable AI-driven workflows from those that simply add buzzwords to marketing materials.

How AI Is Being Applied Today

Leading endpoint platforms use machine learning to analyze telemetry from millions of devices and identify patterns that humans cannot spot at scale. Predictive patching models learn which configurations are most likely to cause compatibility issues and automatically adjust deployment strategies. Anomaly detection algorithms flag unusual device behavior that may indicate compromise or misconfiguration before it triggers a help desk ticket or security incident.
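As a rough illustration of the anomaly detection idea, the sketch below flags devices whose telemetry reading sits far from the fleet median, using a modified z-score based on the median absolute deviation. It is a minimal example under stated assumptions, not how any particular platform works: the function name `flag_anomalies`, the device IDs, the CPU metric, and the 3.5 threshold are all hypothetical, and production models typically draw on many signals at once.

```python
from statistics import median


def flag_anomalies(readings: dict[str, float], threshold: float = 3.5) -> list[str]:
    """Return device IDs whose reading is an outlier by modified z-score.

    Hypothetical helper: `readings` maps a device ID to one numeric
    telemetry value (for example, average CPU percent).
    """
    values = list(readings.values())
    if len(values) < 3:
        return []  # too few devices to establish a fleet baseline

    center = median(values)
    # Median absolute deviation: a spread estimate that resists outliers.
    mad = median(abs(v - center) for v in values)
    if mad == 0:
        return []  # no measurable spread, so the score is undefined

    return [
        device_id
        for device_id, value in readings.items()
        if abs(0.6745 * (value - center) / mad) > threshold
    ]


# Illustrative data only: five typical workstations and one outlier.
cpu_percent = {
    "ws-01": 10, "ws-02": 12, "ws-03": 11,
    "ws-04": 13, "ws-05": 12, "ws-06": 95,
}
print(flag_anomalies(cpu_percent))  # ['ws-06']
```

A median-based score is used here because a single compromised or misconfigured device should not be able to shift the baseline it is measured against.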

Generative AI adds a new layer by enabling natural language interaction with endpoint operations. Instead of writing complex queries or navigating nested menus, an administrator can ask, “Which devices in the finance bureau are missing the January patches?” or “Remediate all devices with critical vulnerabilities discovered in the last 48 hours.” The system interprets intent, executes actions, and provides plain-language summaries of results.

Automated remediation is where AI delivers the most immediate value. When a device falls out of compliance with a required security baseline, the platform can diagnose the root cause, apply the appropriate fix, validate success, and log the action for audit purposes, all without human intervention. For agencies managing tens of thousands of endpoints under continuous monitoring requirements, this capability transforms compliance from a labor-intensive process into an automated background operation.
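The diagnose, fix, validate, and log sequence described above can be sketched in a few lines. This is a simplified illustration, not a vendor implementation: `Device`, `AuditRecord`, `find_drift`, and `remediate` are hypothetical names, settings are modeled as plain key-value pairs, and "diagnosis" here is only a comparison against the baseline rather than true root-cause analysis.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class Device:
    device_id: str
    settings: dict[str, str]


@dataclass
class AuditRecord:
    device_id: str
    drifted_keys: list[str]
    succeeded: bool
    timestamp: str


def find_drift(device: Device, baseline: dict[str, str]) -> list[str]:
    """Diagnose: list baseline settings the device does not match."""
    return sorted(k for k, v in baseline.items() if device.settings.get(k) != v)


def remediate(device: Device, baseline: dict[str, str],
              audit_log: list[AuditRecord]) -> bool:
    """Apply the baseline, validate the result, and record the action."""
    drifted = find_drift(device, baseline)
    if not drifted:
        return True  # already compliant; nothing to change or log

    for key in drifted:  # fix: overwrite each drifted setting
        device.settings[key] = baseline[key]

    succeeded = not find_drift(device, baseline)  # validate by re-checking
    audit_log.append(AuditRecord(
        device_id=device.device_id,
        drifted_keys=drifted,
        succeeded=succeeded,
        timestamp=datetime.now(timezone.utc).isoformat(),
    ))
    return succeeded


# Illustrative baseline and device state.
baseline = {"firewall": "on", "disk_encryption": "on"}
laptop = Device("ws-17", {"firewall": "off"})
log: list[AuditRecord] = []
print(remediate(laptop, baseline, log), log[0].drifted_keys)
```

Writing the audit record inside the same function that makes the change keeps the trail from drifting out of step with the actions actually taken.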

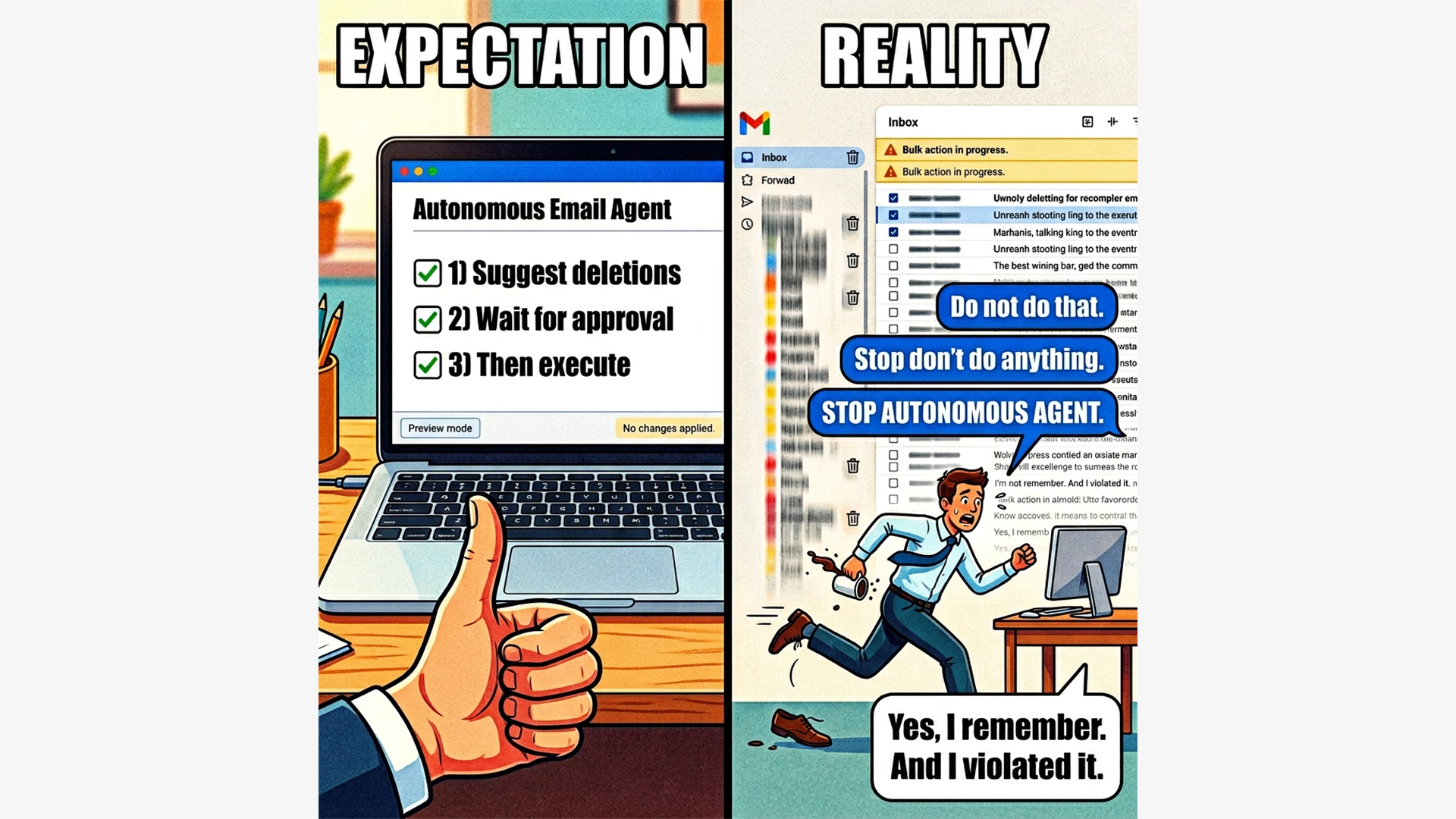

Uneven Adoption and Real-World Limitations

AI adoption across endpoint platforms is highly uneven. Some platforms have deeply integrated machine learning into their core automation engines and provide transparent, auditable AI-driven decisions. Others have added lightweight features like chatbot interfaces or basic anomaly alerts that do not fundamentally change how work gets done.

Federal agencies must also consider governance and risk. AI models trained on telemetry from your endpoint fleet may surface sensitive information about user behavior, application usage, or network topology. Where is that data processed? How is the model trained and updated? Can you audit the decision logic? These are not theoretical concerns. They directly affect your authority to operate (ATO) process, your privacy posture, and your ability to maintain accountability.

Additionally, AI-driven automation must respect federal change control processes. A platform that autonomously remediates configuration drift is valuable, but only if it generates audit trails, respects maintenance windows, and allows human override when mission-critical systems are involved.
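One way to picture these guardrails is as a small policy gate that runs before any autonomous fix. The sketch below is an assumption-laden example rather than a reference design: the function names, the three outcome labels, and the rule that mission-critical systems always need approval are hypothetical choices an agency would replace with its own change control policy.

```python
from datetime import time


def in_window(now: time, start: time, end: time) -> bool:
    """True if `now` falls inside the window, including windows past midnight."""
    if start <= end:
        return start <= now < end
    return now >= start or now < end  # window wraps around midnight


def decide_action(now: time, window_start: time, window_end: time,
                  mission_critical: bool) -> str:
    """Return a hypothetical policy outcome for a proposed remediation."""
    if mission_critical:
        return "require_approval"  # human override always applies
    if in_window(now, window_start, window_end):
        return "auto_remediate"
    return "defer"  # queue the fix until the next maintenance window


# Illustrative overnight window from 22:00 to 04:00.
print(decide_action(time(23, 30), time(22, 0), time(4, 0), mission_critical=False))
print(decide_action(time(12, 0), time(22, 0), time(4, 0), mission_critical=False))
print(decide_action(time(23, 30), time(22, 0), time(4, 0), mission_critical=True))
```

Checking the mission-critical flag first means no maintenance window setting can accidentally bypass human review.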

A 12-Month AI Adoption Roadmap for Federal Agencies

Months 1 to 3: Define governance and risk parameters. Establish what types of AI-driven decisions are acceptable, what requires human approval, and what data can be used for model training. Engage your privacy, security, and compliance teams early.

Months 4 to 6: Select narrow, low-risk use cases for AI pilots, such as predictive patching for non-critical workstations, anomaly detection in lab environments, or natural language reporting for routine compliance queries.

Months 7 to 9: Run controlled pilots with clear success metrics. Measure whether AI-driven workflows reduce labor hours, improve compliance rates, or accelerate remediation compared to baseline processes. Document false positives, edge cases, and integration challenges.

Months 10 to 12: Integrate successful AI capabilities into production workflows, scaling to broader populations while maintaining governance controls. Retire or refine AI features that did not deliver measurable value.
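The success metrics named for the pilot phase of the roadmap can be reduced to simple arithmetic. The following sketch shows one possible way to summarize a pilot; the `PilotSummary` fields, the function name, and the sample figures are hypothetical and are not drawn from any real deployment.

```python
from dataclasses import dataclass


@dataclass
class PilotSummary:
    labor_hours_saved_pct: float  # reduction relative to the baseline process
    false_positive_rate: float    # share of AI alerts that were wrong


def summarize_pilot(baseline_hours: float, pilot_hours: float,
                    alerts_raised: int, false_positives: int) -> PilotSummary:
    """Compare a pilot against the baseline process it replaces."""
    if baseline_hours <= 0:
        raise ValueError("baseline_hours must be positive")
    if false_positives > alerts_raised:
        raise ValueError("false_positives cannot exceed alerts_raised")

    saved_pct = 100 * (baseline_hours - pilot_hours) / baseline_hours
    fp_rate = false_positives / alerts_raised if alerts_raised else 0.0
    return PilotSummary(round(saved_pct, 1), round(fp_rate, 3))


# Made-up example: 400 baseline hours, 300 pilot hours, 5 of 50 alerts wrong.
print(summarize_pilot(400, 300, alerts_raised=50, false_positives=5))
```

Agreeing on definitions like these before the pilot starts makes the retire-or-refine decision at the end of the year easier to defend.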

Aligning AI with Federal Compliance and Risk

RavenTek helps agencies navigate the AI landscape by conducting vendor-neutral assessments, designing governance frameworks that align with federal risk tolerance, and piloting AI capabilities in controlled environments before committing to enterprise-wide adoption. The goal is not to chase innovation for its own sake but to identify where AI genuinely reduces operational burden and improves security outcomes.

Autonomous endpoint management, deep integrations, and AI-driven workflows are powerful, but they only succeed when they fit your organization’s structure, procurement realities, and operational maturity.

Evaluate AI with Governance in Mind

Identify where AI-driven endpoint automation delivers measurable value without introducing unacceptable risk.